Don't Send Your AI to College When It Just Needs a Handbook

Demystifying the "Learning" Illusion for Business Leaders

I recently watched a business leader get visibly frustrated with a chatbot.

They had spent 20 minutes the previous day “teaching” the AI about a specific project code. Today, they asked about it again, and the AI hallucinated an answer.

“But I taught it!” they said. “Why isn’t it learning?”

To them, the AI was a new employee who wasn’t paying attention. To me, the AI was a calculator that had been cleared.

This is the Learning Illusion.

In the human world, “learning” is a single concept: you hear a fact, your brain wires new connections, and you know it forever. In the AI world, “learning” is a suitcase word packed with four completely different technologies, each with different costs, risks, and retention spans.

If you don’t understand the difference, you will spend millions trying to “train” a model when all you really needed to do was upload a PDF.

Here is your guide to the four ways a “Digital Employee” actually learns.

1. The PhD: Model Training (Machine Learning)

The Analogy: Sending your employee to University for 4 years.

When people say “Machine Learning,” this is usually what they mean. It involves showing a neural network billions of examples until it picks up the underlying patterns. This creates the “Base Model,” the foundation underneath products like GPT-4 or Claude.

What it learns: General reasoning, languages (Python, French), and how the world works.

The Cost: Astronomical. Millions of dollars and months of time.

The Trap: Executives often think, “Our pricing changed, so we need to retrain the model.” No, you don’t. You wouldn’t send an employee back to get a second PhD just because the price of widgets went up. You would just hand them a price sheet.

When to use it: Only when you need the AI to learn a completely new skill (like a proprietary coding language) that it has never seen before. Even then, you would usually fine-tune an existing model rather than train one from scratch.

2. The Handbook: RAG (Retrieval Augmented Generation)

The Analogy: Giving your employee a Company Wiki and an Open-Book Exam.

If training is the PhD, this is the day-to-day reality. We don’t change the AI’s brain; we just give it access to a library.

When you ask a question, the AI runs a search, finds the relevant document (the “Handbook”), reads it, and answers you. This is called RAG.

What it learns: Facts, figures, policies, and yesterday’s meeting notes.

The Cost: Cheap and instant.

The Magic: If your pricing changes, you don’t call a data scientist. You just update the PDF in the folder. As soon as the search index picks up the new file, the AI “learns” the new price because it looks it up every time.
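For the technically curious, here is a minimal sketch of that look-it-up-every-time loop. It is a toy under stated assumptions: the `HANDBOOK` contents, the prices, and the word-overlap search are all made up for illustration (real systems typically use a proper search index), and the final call to a model is left out.

```python
import re

# Hypothetical in-memory "handbook": document name -> text.
HANDBOOK = {
    "pricing.txt": "The Standard plan costs $40 per seat per month.",
    "vacation.txt": "Employees accrue 1.5 vacation days per month.",
}

def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, handbook):
    """Return the (name, text) pair sharing the most words with the question."""
    q = tokenize(question)
    return max(handbook.items(), key=lambda item: len(q & tokenize(item[1])))

def build_prompt(question, handbook):
    """Paste the retrieved passage into the prompt that would go to the model."""
    name, text = retrieve(question, handbook)
    return f"Answer using only this source.\n[{name}] {text}\n\nQuestion: {question}"

question = "How much does the Standard plan cost per seat?"
before = build_prompt(question, HANDBOOK)

# "Updating the PDF": change the document, not the model.
HANDBOOK["pricing.txt"] = "The Standard plan costs $45 per seat per month."
after = build_prompt(question, HANDBOOK)
```

Nothing about the model changed between `before` and `after`. Only the document did, and the second prompt carries the new price.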

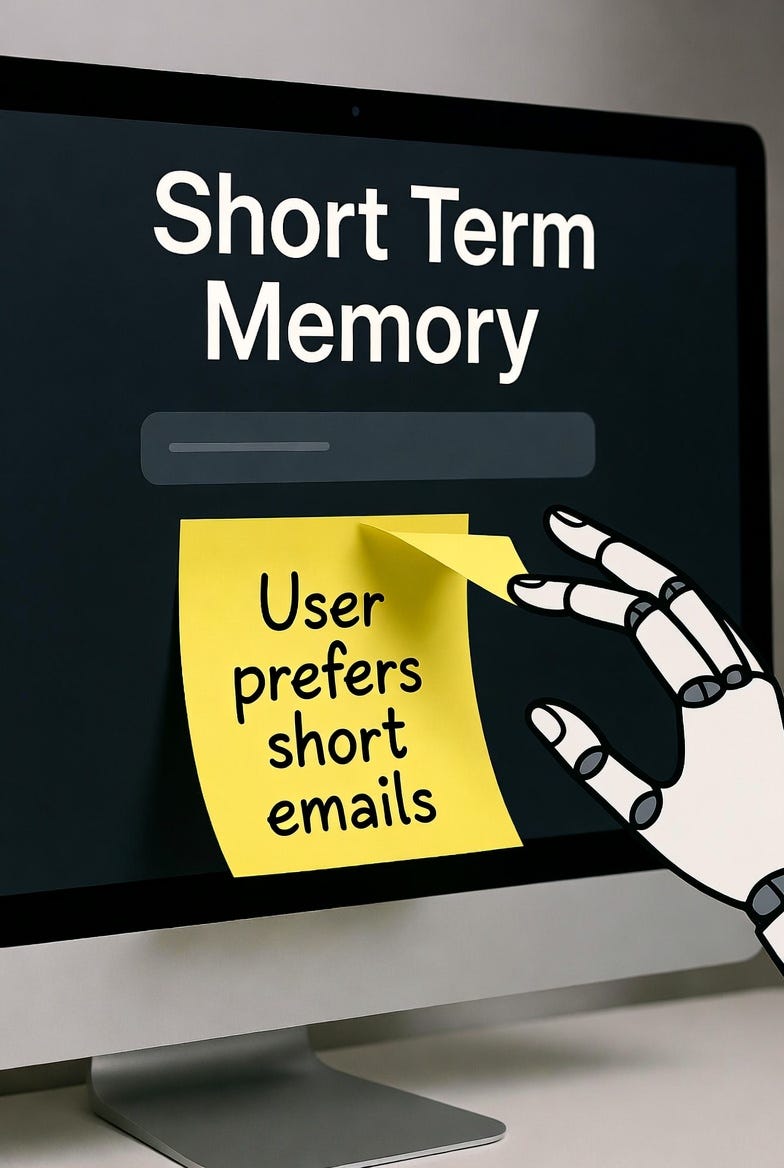

3. The Sticky Note: Memory (Context)

The Analogy: A notepad on the desk that gets shredded at night.

This is where the “I taught it yesterday” frustration comes from.

Most AI models have Context (Short-Term Memory). They can see everything in the current conversation, up to a size limit. But the moment you close that tab, the “employee” gets amnesia.

To fix this, engineers are building Long-Term Memory systems. Think of this as a “Project Log” where the agent writes down important details (“User prefers short emails,” “Project X is due Tuesday”) and stores them in a database to retrieve later.

What it learns: Your preferences and ongoing project state.

The Reality: Unless your engineering team explicitly builds a “Memory Database,” your AI isn’t learning from your chats. It’s just reading the transcript of the current meeting.
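To make the “Memory Database” idea concrete, here is a rough sketch of a project log that survives between sessions. The `MemoryStore` class, the `notes` table, and the user name `alice` are hypothetical names chosen for illustration; a production system would also need to decide what is worth remembering and when to forget it.

```python
import os
import sqlite3
import tempfile

class MemoryStore:
    """Hypothetical 'project log': notes that survive after a chat ends."""

    def __init__(self, path):
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS notes (user_id TEXT, note TEXT)"
        )

    def remember(self, user_id, note):
        """Write one note to disk so a later session can find it."""
        self.conn.execute("INSERT INTO notes VALUES (?, ?)", (user_id, note))
        self.conn.commit()

    def recall(self, user_id):
        """Return this user's notes in the order they were written."""
        rows = self.conn.execute(
            "SELECT note FROM notes WHERE user_id = ? ORDER BY rowid", (user_id,)
        )
        return [row[0] for row in rows]

    def close(self):
        self.conn.close()

db_path = os.path.join(tempfile.mkdtemp(), "memory.db")

# Day 1: the agent writes down what it was told, then the session ends.
day1 = MemoryStore(db_path)
day1.remember("alice", "User prefers short emails")
day1.remember("alice", "Project X is due Tuesday")
day1.close()

# Day 2: a brand-new session reloads the notes and puts them in the prompt.
day2 = MemoryStore(db_path)
notes = day2.recall("alice")
day2.close()
prompt_prefix = "Known about this user:\n" + "\n".join(f"- {n}" for n in notes)
```

The model itself still remembers nothing. The application reads the log and pastes it into the next conversation.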

4. The Coaching: System Prompting

The Analogy: The Manager’s Standing Orders (SOPs).

This is the most underrated form of “learning.” You can change an AI’s entire behavior just by writing a better job description.

By updating the System Prompt (the hidden instructions the AI sees first), you can say: “You are a senior auditor. Be skeptical. Never apologize. Always cite sources.”

What it learns: Behavior, tone, and rules of engagement.

The ROI: This is a form of “In-Context Learning.” It costs almost nothing and often yields the biggest immediate improvement in quality.
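In code, a system prompt is often just the first entry in the list of messages sent to the model. The sketch below assumes a `role`/`content` message shape, which many chat-style APIs use in some form; exact field names vary by vendor, and `build_messages` is a hypothetical helper, not any library's function.

```python
SYSTEM_PROMPT = (
    "You are a senior auditor. Be skeptical. "
    "Never apologize. Always cite sources."
)

def build_messages(system_prompt, history, user_message):
    """Put the standing orders first, then the conversation so far."""
    return (
        [{"role": "system", "content": system_prompt}]
        + list(history)
        + [{"role": "user", "content": user_message}]
    )

# Assumed message shape; check your vendor's documentation for the real one.
messages = build_messages(SYSTEM_PROMPT, [], "Review this expense report.")
```

Changing the AI's behavior means editing one string, `SYSTEM_PROMPT`, and nothing else.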

The “Continuous Learning” Myth

There is a dangerous myth that AI models in production are “continuously learning” like a human intern—getting smarter with every interaction.

They are not. And you don’t want them to.

If an AI updated its “brain” (weights) based on every user chat, it would be a disaster.

Data Poisoning: Trolls could teach it hate speech.

Catastrophic Forgetting: Learning a new sales process might make it forget how to write SQL.

In the enterprise, “Continuous Improvement” doesn’t mean the brain gets bigger. It means the Handbook (Data) gets updated and the Coaching (Prompts) gets refined.

Choosing the Right Tool for the Job

So, which lever should you pull when your digital employee needs to “learn” something new? The answer usually isn’t the one most people reach for first.

Start by asking if you are trying to teach a fundamental skill or just share a fact. If you need your AI to master something structurally new—like translating a dead language or coding in a proprietary mainframe syntax—then you are looking at Training or Fine-Tuning. This is the “University Degree.” It’s expensive, slow, and risky. I’ve seen teams burn six figures retraining a model to “learn” a product catalog when a simple database lookup would have worked perfectly. Treat this as a last resort.

For almost everything else (new sales figures, updated policies, or changing market data), you want RAG (The Handbook). It’s cheap, instant, and hallucinates far less because its answers are grounded in the documents it retrieves, and it can cite those sources so you can check them. If your pricing changes tomorrow, you don’t need a data scientist; you just need to upload the new PDF. This is where 90% of enterprise “learning” should live.

But what if the problem isn’t facts, but context? If you want the AI to remember that your boss hates long emails or that “Project Apollo” is due on Tuesday, you need Memory. This is the “Sticky Note” on the monitor. It requires some engineering effort to build, but it’s the only way to stop your users from screaming, “I already told you that!” without rebuilding the model from scratch.

Finally, if the AI knows the facts but the vibe is off—it’s too chatty, too apologetic, or not skeptical enough—you don’t need data; you need Coaching (System Prompting). This is the fastest lever you have. Rewrite the job description (the prompt), and the personality shifts overnight. It’s usually free, and it solves behavioral issues that data never will.
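If it helps to see the four questions as a lookup table, here is a small sketch. The `need` labels (`new_skill`, `facts`, `context`, `behavior`) and the `choose_lever` function are hypothetical shorthand for the cases above, not an established taxonomy.

```python
# Hypothetical labels for the four kinds of gap described above.
LEVERS = {
    "new_skill": "Training or Fine-Tuning (last resort)",
    "facts": "RAG (The Handbook)",
    "context": "Memory (The Sticky Note)",
    "behavior": "Coaching (System Prompting)",
}

def choose_lever(need):
    """Map the kind of gap to the lever that addresses it."""
    if need not in LEVERS:
        raise ValueError(f"Unknown need: {need!r}. Expected one of {sorted(LEVERS)}")
    return LEVERS[need]

pricing_change = choose_lever("facts")
too_chatty = choose_lever("behavior")
```

A pricing change is a `facts` problem and a chatty assistant is a `behavior` problem; neither one routes to training.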

The 100x Move

Stop trying to “train” your models. It’s like performing brain surgery to teach someone a phone number.

Instead, focus on Curating your Handbook. In the agentic era, your competitive advantage isn’t the intelligence of the model—it’s the accessibility of your data. A genius AI with a messy filing cabinet is useless. An average AI with a perfect handbook is unstoppable. Don’t send your digital employee to college; just give them better documentation.

What’s the most frustrating “But I taught it!” moment you’ve had with AI? Share below.