Frameworks, Platforms, or Raw Code? The Build-vs-Buy Decision Matrix

The Architecture Is Approved. The VP of Engineering Asks: "So What's Our Tech Stack?" The Answer Depends on Where You Are Today and How Fast the Landscape Under Your Feet Is Shifting.

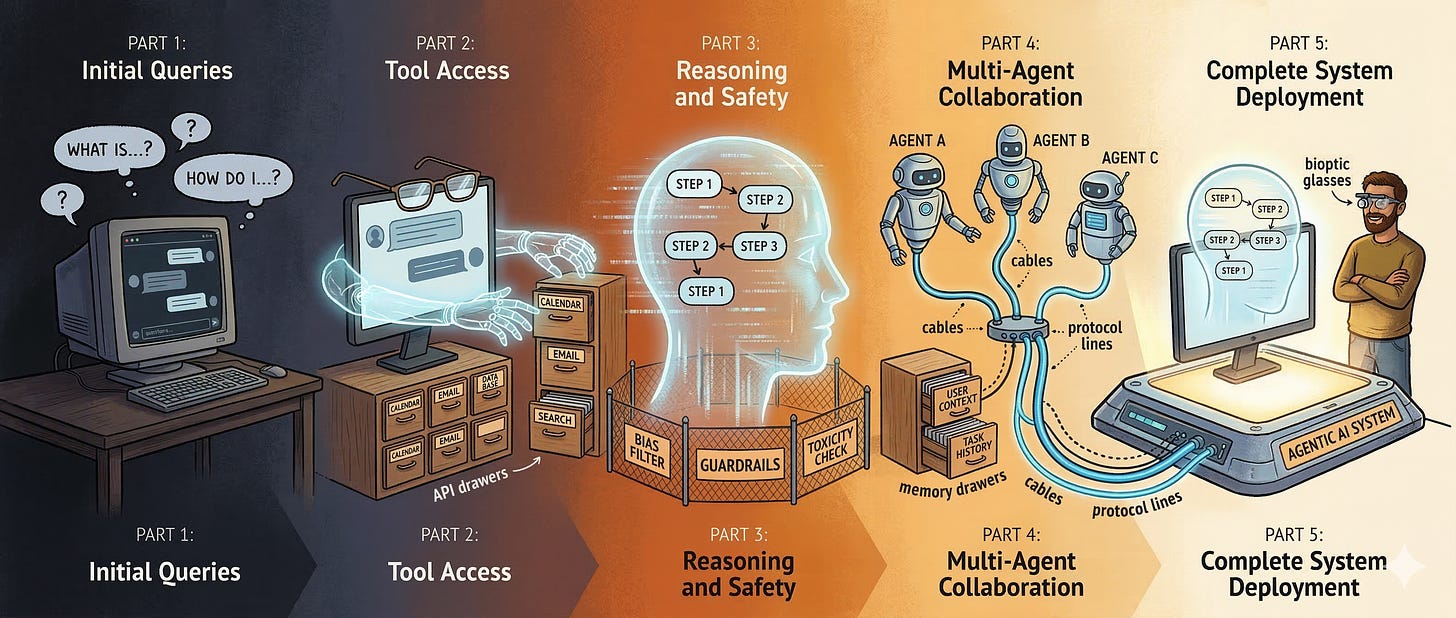

This is Part 5 of The Architecture of Agency, a 5-part series translating Agentic AI jargon into the software architecture paradigms you already know.

We’ve spent four articles building an agentic AI architecture from first principles. We started with a naive chatbot that hallucinated refund policies (Part 1). We gave it glasses and hands: RAG, tools, structured outputs (Part 2). We taught it to think step by step and built the guardrails and observability to trust its reasoning (Part 3). We connected it to a multi-agent ecosystem with standardized protocols, memory, and identity (Part 4).

The architecture diagram is on the whiteboard. The VP of Customer Experience is sold. The security team has signed off on the identity model. The CFO has approved the budget.

Then the VP of Engineering walks up to the whiteboard, uncaps a red marker, and writes the question that every technical leader eventually asks:

“Now... how do we build it?”

This is the moment where architecture meets reality. And the honest answer, the one most conference talks and vendor pitches won’t give you, is this: it depends, and the right answer today might be the wrong answer in six months.

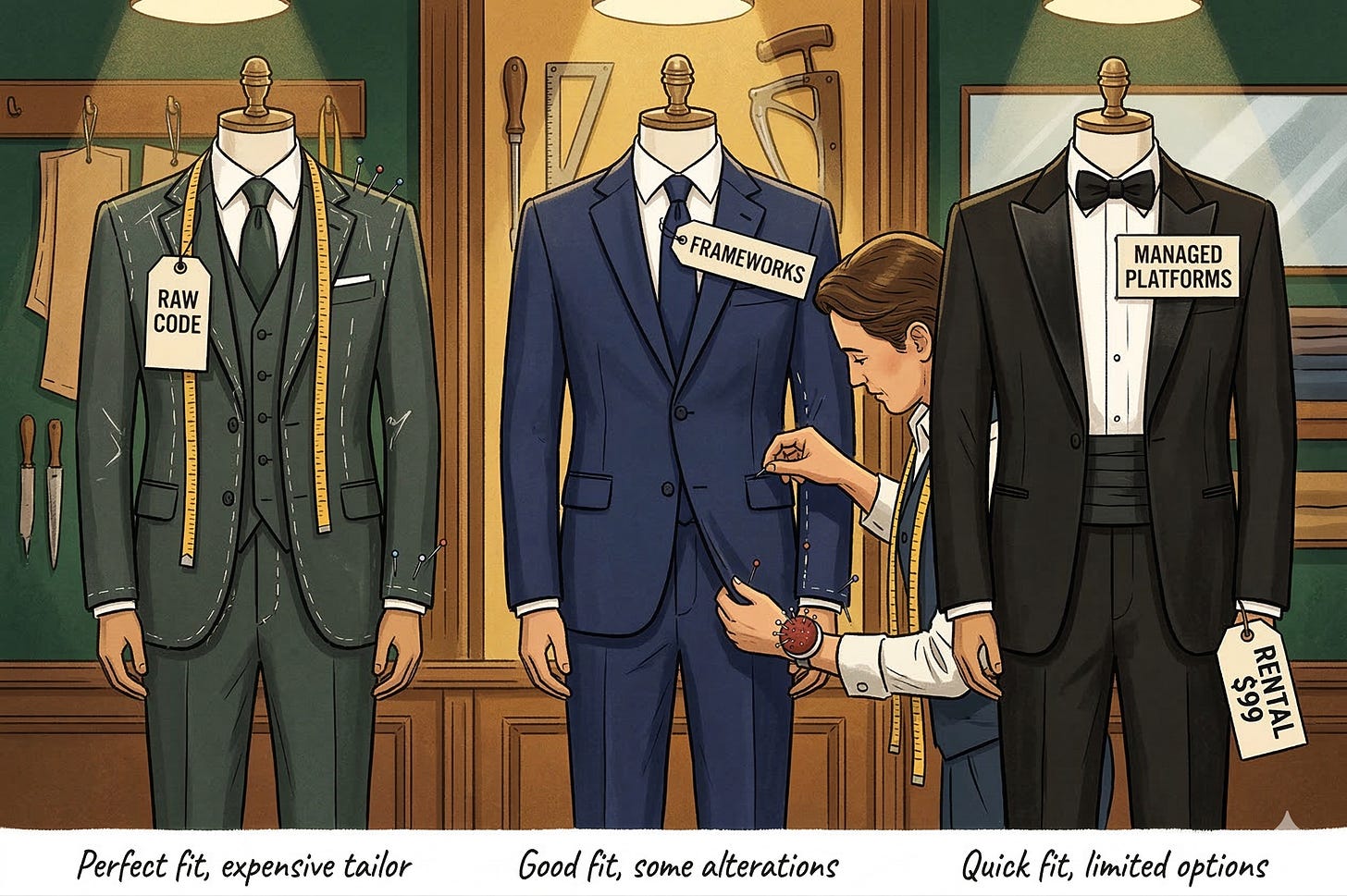

The Three Paths

There are fundamentally three approaches to building an agentic AI system in 2026, and they map to a spectrum of control versus convenience that every engineer has navigated before.

Path 1: Raw Code. You write the orchestration loop, the tool integrations, the memory management, and the guardrails yourself using the model provider’s API directly. Maximum control. Maximum effort. Maximum risk that you’re rebuilding what someone else has already solved.

Path 2: Orchestration Frameworks. You use a framework like LangGraph, the OpenAI Agents SDK, or the Microsoft Agent Framework to handle the plumbing (the ReAct loops, tool routing, state management) while you focus on the business logic. Moderate control. Moderate effort. Moderate risk of framework lock-in.

Path 3: Managed Platforms. You deploy on a fully managed platform like Amazon Bedrock AgentCore, Azure AI Agent Service, or Vertex AI Agent Builder that handles infrastructure, scaling, and many of the architectural patterns we’ve discussed in this series. Minimum effort. Minimum control. Maximum risk of vendor lock-in.

Each path has legitimate use cases. The mistake is treating the decision as ideological (“real engineers write their own code”) rather than strategic (“which path minimizes our total risk given our team, timeline, and the pace of change in this ecosystem?”).

Let’s walk through each one.

Path 1: Raw Code (The Custom Suit)

Writing your agentic system from scratch using direct API calls gives you complete control over every aspect of the architecture. You decide how the ReAct loop works. You decide how context is managed. You decide how tools are invoked and results are processed. Nothing is hidden behind an abstraction you didn’t choose.
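To make "you decide how the loop works" concrete, here is a minimal sketch of a hand-rolled loop. Everything in it is illustrative: `fake_model` stands in for a real provider API call, and `get_order_status`, `TOOLS`, and the message and decision shapes are hypothetical names I made up for this example, not any vendor's API.

```python
from typing import Callable


def get_order_status(order_id: str) -> str:
    """Hypothetical tool: a real one would query your order system."""
    return f"Order {order_id} has shipped."


# Tool registry: plain functions keyed by the name the model will ask for.
TOOLS: dict[str, Callable[[str], str]] = {"get_order_status": get_order_status}


def fake_model(messages: list[dict]) -> dict:
    """Stand-in for a provider call (assumption: swap in your real client).

    Asks for a tool on the first turn, then answers once it sees a tool result.
    """
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "final", "content": f"Good news: {last['content']}"}
    return {"type": "tool_call", "name": "get_order_status", "argument": "A123"}


def run_agent(user_input: str, model=fake_model, max_steps: int = 5) -> str:
    """Reason, act, observe, repeat, with a hard cap on the number of steps."""
    messages = [{"role": "user", "content": user_input}]
    for _ in range(max_steps):
        decision = model(messages)
        if decision["type"] == "final":
            return decision["content"]
        tool = TOOLS.get(decision["name"])
        if tool is None:
            result = f"Unknown tool: {decision['name']}"
        else:
            result = tool(decision["argument"])
        # Feed the observation back so the next model call can use it.
        messages.append({"role": "tool", "content": result})
    return "Step limit reached without a final answer."


print(run_agent("Where is my order?"))
```

Every line here is yours to change, which is the appeal. It is also the cost: retries, timeouts, tracing, and state persistence are all still unwritten.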

When this makes sense: You have a highly specialized use case that doesn’t fit standard patterns. Your team has deep LLM engineering expertise. You need to squeeze every last token out of your budget, and framework overhead is unacceptable. Your compliance requirements demand that you can explain and audit every line of code in the system.

When this is a trap: You’re a team of four trying to ship by end of quarter. You spend three weeks building a retry mechanism for tool calls that LangGraph gives you for free. You ship, and then spend the next three months maintaining infrastructure instead of improving the agent.

The raw code path is a custom suit. It fits perfectly if you can afford the tailor and if your body doesn’t change shape. In a landscape that’s evolving as fast as agentic AI, that’s a significant “if.”

Token Caching and Cost Optimization

One area where raw code shines is cost optimization, because you have granular control over every API call.

Token costs are the operational expense that catches most teams off guard. Remember the Manus statistic from Part 1: a 100:1 input-to-output token ratio. For every token your agent generates, it processes a hundred tokens of context. At scale, this adds up fast.

Token caching (sometimes called prompt caching) is a technique where the model’s processed representation of static context (your system prompt, standard documents, tool definitions) is cached so you don’t pay full price to re-process it on every request. Anthropic, OpenAI, and Google all offer variants of this. The savings can be dramatic: a 60-90% reduction in input token costs for conversations that share common prefixes.
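A quick back-of-the-envelope calculation shows where a number in that range can come from. The figures below are assumptions chosen for illustration, not any provider's price list: $3.00 per million input tokens, cached tokens billed at a 90% discount, and a 100,000-token context of which 90% is a stable prefix. The sketch also ignores cache-write surcharges and cache expiry, both of which reduce real-world savings.

```python
def input_cost_usd(total_tokens: int, cached_fraction: float,
                   price_per_million: float, cached_discount: float) -> float:
    """Input cost of one request when part of the context is a cache hit."""
    cached_tokens = total_tokens * cached_fraction
    fresh_tokens = total_tokens - cached_tokens
    cached_price = price_per_million * (1.0 - cached_discount)
    return (cached_tokens * cached_price + fresh_tokens * price_per_million) / 1_000_000


# Hypothetical numbers: 100k-token context, $3.00 per million, 90% discount.
baseline = input_cost_usd(100_000, 0.0, 3.00, 0.9)    # nothing cached
with_cache = input_cost_usd(100_000, 0.9, 3.00, 0.9)  # 90% of context cached
savings = 1 - with_cache / baseline

print(f"baseline ${baseline:.3f}, cached ${with_cache:.3f}, savings {savings:.0%}")
```

Under these assumptions the request drops from about $0.30 to about $0.057 of input cost, a saving of roughly 81%. Shrink the cached fraction or the discount and the saving shrinks with it.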

The raw code path gives you direct control over caching strategies. You decide exactly what gets cached, when caches are invalidated, and how cached context interacts with dynamic content. In a framework or platform, you’re at the mercy of the abstraction’s caching decisions, which may or may not align with your cost profile.
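Because provider caches generally match on a shared prefix, the main thing you control in raw code is keeping that prefix byte-for-byte stable. Here is a small sketch of the idea, assuming a hypothetical request shape (`prefix`, `prefix_key`, `dynamic`) rather than any provider's actual payload format.

```python
import hashlib
import json


def build_request(system_prompt: str, tool_definitions: list[dict],
                  conversation: list[dict]) -> dict:
    """Put static content first and serialize it deterministically.

    The hash is a cheap way to notice when the static prefix has changed,
    which is when you should expect cache misses.
    """
    static_prefix = system_prompt + "\n" + json.dumps(tool_definitions, sort_keys=True)
    prefix_key = hashlib.sha256(static_prefix.encode("utf-8")).hexdigest()
    return {
        "prefix": static_prefix,        # stable across requests, cache-friendly
        "prefix_key": prefix_key,       # changes only when the static part changes
        "dynamic": list(conversation),  # per-request content goes last
    }


tools = [{"name": "lookup", "description": "Find an order"}]
first = build_request("You are a support agent.", tools, [{"role": "user", "content": "hi"}])
second = build_request("You are a support agent.", tools, [{"role": "user", "content": "bye"}])
print(first["prefix_key"] == second["prefix_key"])
```

The design choice worth noticing is what stays out of the prefix. Put a timestamp or a user name in the system prompt and every request gets a different prefix, which quietly defeats the cache.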

Other cost levers include aggressive context compression (from Part 1), smart chunking strategies for RAG (from Part 2), and loop guardrails that cap token spend per interaction (from Part 3). The theme is consistent: cost optimization in agentic systems is not an afterthought. It’s an architectural concern that touches every layer of the stack.
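As one example of such a lever, a per-interaction token cap can be very small. This is a sketch under my own assumptions: `TokenBudget` and `TokenBudgetExceeded` are hypothetical names, and the token counts would come from whatever usage figures your provider reports on each response.

```python
class TokenBudgetExceeded(RuntimeError):
    """Raised when an interaction spends more tokens than it was allowed."""


class TokenBudget:
    """Tracks token spend for a single interaction and enforces a hard cap."""

    def __init__(self, max_tokens: int) -> None:
        self.max_tokens = max_tokens
        self.used = 0

    def charge(self, input_tokens: int, output_tokens: int) -> int:
        """Record one model call and return the tokens still available."""
        self.used += input_tokens + output_tokens
        if self.used > self.max_tokens:
            raise TokenBudgetExceeded(f"used {self.used} of {self.max_tokens} tokens")
        return self.max_tokens - self.used


budget = TokenBudget(max_tokens=1_000)
print(budget.charge(input_tokens=400, output_tokens=100))
```

Call `charge` after every model response inside the agent loop, and treat the exception as a signal to stop and escalate rather than to retry.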

Path 2: Orchestration Frameworks (The Off-the-Rack Suit with Alterations)

Frameworks sit in the middle of the spectrum. They provide the structural patterns (loops, tool routing, state management, memory) while leaving the business logic, model choice, and deployment infrastructure to you.

LangGraph

LangGraph (from the LangChain team) models your agent as a state machine: a directed graph where nodes are processing steps and edges are transitions. If you’ve ever built a workflow engine or a finite state machine, this is immediately familiar.

The ReAct loop from Part 3 becomes a cycle in the graph: a “reasoning” node connects to a “tool execution” node, which connects to an “observation” node, which loops back to “reasoning” until a “done” condition routes to the output. Guardrails are nodes. Routing decisions are conditional edges. The entire agent is a visual, debuggable graph.
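The shape of that graph is easier to see in code. The sketch below is deliberately plain Python, not LangGraph's API: the node names mirror the paragraph above, while `NODES`, `EDGES`, `run_graph`, and the stubbed node bodies are hypothetical stand-ins for real model and tool calls.

```python
def reasoning(state: dict) -> dict:
    # Stub for a model call: ask for a tool until one observation exists.
    state["next_action"] = "finish" if state["observations"] else "lookup"
    return state


def tool_execution(state: dict) -> dict:
    state["tool_result"] = f"result for {state['question']}"
    return state


def observation(state: dict) -> dict:
    state["observations"].append(state.pop("tool_result"))
    return state


def output(state: dict) -> dict:
    state["answer"] = f"Answer based on {len(state['observations'])} observation(s)"
    return state


NODES = {"reasoning": reasoning, "tool_execution": tool_execution,
         "observation": observation, "output": output}

# Each edge is a function of the state, so a conditional edge is just a branch.
EDGES = {
    "reasoning": lambda s: "output" if s["next_action"] == "finish" else "tool_execution",
    "tool_execution": lambda s: "observation",
    "observation": lambda s: "reasoning",  # the cycle back into reasoning
    "output": lambda s: None,              # terminal node
}


def run_graph(state: dict, start: str = "reasoning", max_steps: int = 20):
    """Walk the graph, recording the path taken so the run can be inspected."""
    node, path = start, []
    for _ in range(max_steps):
        path.append(node)
        state = NODES[node](state)
        node = EDGES[node](state)
        if node is None:
            return state, path
    raise RuntimeError("Step limit reached before the graph finished")


final_state, path = run_graph({"question": "order A123", "observations": []})
print(" -> ".join(path))
```

The recorded path is the point: reasoning, tool execution, observation, back to reasoning, then output. A framework gives you this structure plus persistence and tooling around it.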

LangGraph’s strength is explicitness. Every state transition is defined. Every branch is visible. You can serialize the agent’s state at any point, resume it later, or hand it off to another process. For teams that value auditability and control (which, after Parts 3 and 4, should be all of you), this transparency is invaluable.
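Serialize-and-resume sounds abstract until you see how little it requires once state is explicit. This is a minimal sketch of the idea, not LangGraph's checkpointing API: `save_checkpoint` and `load_checkpoint` are hypothetical helpers, and the sketch assumes the agent's state is JSON-serializable.

```python
import json


def save_checkpoint(state: dict, next_node: str) -> str:
    """Capture everything needed to resume: the state and where to go next."""
    return json.dumps({"next_node": next_node, "state": state}, sort_keys=True)


def load_checkpoint(blob: str) -> tuple:
    """Restore the state and the node to resume from."""
    payload = json.loads(blob)
    return payload["state"], payload["next_node"]


# Pause before a step that needs human approval...
paused = save_checkpoint(
    {"question": "Refund order A123?", "observations": ["order not delivered"]},
    next_node="approval",
)

# ...and later, possibly in another process, pick up where it left off.
state, next_node = load_checkpoint(paused)
print(next_node, state["observations"])
```

In production the blob would go to a database rather than a variable, but the contract is the same: explicit state plus an explicit next step.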

The trade-off is verbosity. Simple agents require more boilerplate than a raw API call. And LangGraph inherits LangChain’s ecosystem, which some developers find over-abstracted. If you don’t need the graph model, you’re paying a complexity tax for structure you aren’t using.

OpenAI Agents SDK

OpenAI’s Agents SDK takes a different philosophy. Where LangGraph gives you a graph to fill in, the Agents SDK gives you primitives (Agent, Tool, Handoff, Guardrail) and lets you compose them with minimal ceremony. An agent is defined in a few lines: a model, a system prompt, a list of tools, and optional handoff targets.

The SDK is opinionated about the happy path. Tool calling, structured outputs, and multi-agent handoffs work out of the box. If your use case fits the patterns the SDK supports, you’ll move fast. If you need something the SDK doesn’t support, you’ll fight the abstractions.

OpenAI positions this as model-agnostic (it works with other providers), but the ergonomics are optimized for OpenAI models. That’s not necessarily a dealbreaker, but it’s a factor in your vendor diversification strategy.

Microsoft Agent Framework (Successor to Semantic Kernel and AutoGen)

Microsoft’s approach is the most enterprise-flavored. The Agent Framework integrates tightly with Azure services, supports multi-agent orchestration out of the box, and is designed for teams that are already deep in the Microsoft ecosystem: Microsoft Entra ID (formerly Azure AD) for identity, Azure AI Search for RAG, Azure Monitor for observability.

If your enterprise runs on Microsoft, this framework turns the architecture from Parts 1-4 into a set of Azure service configurations rather than custom code. The identity and security model from Part 4, in particular, maps almost directly to Azure AD service principals and managed identities.

The trade-off is lock-in. The deeper you go into the Microsoft Agent Framework, the harder it is to move to a different cloud provider. For some enterprises, that’s an acceptable trade-off: they’re already locked in. For others, it’s a strategic risk.

The Bioptic Lens

Choosing a framework is like choosing assistive technology.

When I started coding with low vision, I tried to do everything with one tool: a screen magnifier. It worked, but I was forcing a single tool to handle problems it wasn’t designed for. Reading code? Great. Navigating file trees? Painful. Reviewing pull requests with inline comments? Nearly impossible.

Over time, I built a toolkit. Magnifier for reading code. VoiceOver for navigating complex UIs. High-contrast themes for reducing eye strain. IDE extensions for code navigation. Each tool has strengths and weaknesses, and the art is knowing which tool to reach for in which context.

Frameworks are the same. LangGraph excels when you need a transparent, auditable state machine. The OpenAI SDK excels when you want to ship fast with minimal boilerplate. The Microsoft Framework excels when your enterprise already lives in Azure. The mistake isn’t picking one; it’s believing one framework will solve every problem, or that the choice is permanent.

A Note on the Framework Churn Problem

Here’s the uncomfortable reality of this space in 2026: frameworks are evolving faster than production systems can adopt them. LangChain went from version 0.1 to 0.3 in under a year, with breaking API changes between each version. OpenAI launched the Agents SDK in early 2025, significantly refactored it by mid-2025, and continues to evolve it. Microsoft has rebranded and restructured their agent tooling multiple times.

This isn’t a criticism; it’s the natural consequence of a field that’s still discovering its own best practices. But it has real implications for your architecture. If you build tightly against LangGraph’s API surface today, and LangGraph releases a fundamentally different state management model in six months, you’re facing a migration.

The defense against framework churn is the same defense against any dependency risk: isolate the framework at the boundary. Your business logic (the reasoning chains, the guardrail rules, the memory retrieval strategies) should be framework-agnostic. The framework handles orchestration plumbing. Your code handles the decisions. If you need to swap from LangGraph to the OpenAI SDK, you rewrite the orchestration layer, not the business logic.

This is Dependency Inversion 101, applied to AI. Your agents should depend on abstractions (tool interfaces, memory interfaces, routing interfaces), not on specific framework implementations. The teams that do this well can ride framework upgrades as improvements rather than experiencing them as crises.
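Here is roughly what that boundary can look like, as a sketch rather than a prescription. All of the names (`Tool`, `Memory`, `RefundDecider`, `DictMemory`, `StubOrderLookup`) and the refund rule itself are hypothetical. In a real system, thin adapters would wrap your chosen framework's tool and memory objects so they satisfy the same interfaces.

```python
from typing import Optional, Protocol


class Tool(Protocol):
    def run(self, argument: str) -> str: ...


class Memory(Protocol):
    def recall(self, key: str) -> Optional[str]: ...
    def remember(self, key: str, value: str) -> None: ...


class RefundDecider:
    """Business logic: imports no framework, depends only on the interfaces."""

    def __init__(self, order_lookup: Tool, memory: Memory) -> None:
        self.order_lookup = order_lookup
        self.memory = memory

    def decide(self, order_id: str) -> str:
        previous = self.memory.recall(order_id)
        if previous is not None:
            return previous  # reuse an earlier decision instead of re-querying
        status = self.order_lookup.run(order_id)
        decision = "refund approved" if status == "not delivered" else "refund needs review"
        self.memory.remember(order_id, decision)
        return decision


class DictMemory:
    """In-memory implementation; a framework-backed store would replace it."""

    def __init__(self) -> None:
        self._data: dict = {}

    def recall(self, key: str) -> Optional[str]:
        return self._data.get(key)

    def remember(self, key: str, value: str) -> None:
        self._data[key] = value


class StubOrderLookup:
    """Stand-in tool; an adapter around a real tool would replace it."""

    def __init__(self) -> None:
        self.calls = 0

    def run(self, argument: str) -> str:
        self.calls += 1
        return "not delivered" if argument == "A123" else "delivered"


decider = RefundDecider(StubOrderLookup(), DictMemory())
print(decider.decide("A123"))
```

Swapping frameworks then means writing new adapters for `Tool` and `Memory`. `RefundDecider` and its tests do not change.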

Path 3: Managed Platforms (The Rental Tux)

Managed platforms take the framework concept to its logical conclusion: the vendor handles not just the abstractions, but the infrastructure, scaling, and operational overhead.

Amazon Bedrock AgentCore lets you define agents, tools, and knowledge bases through configuration. You bring your documents, define your tools, and Bedrock handles RAG pipeline management, tool orchestration, and session memory. It supports multiple foundation models and integrates with the broader AWS ecosystem.

Azure AI Agent Service provides similar capabilities within the Azure ecosystem. It’s essentially the Microsoft Agent Framework deployed as a managed service, with built-in connections to Azure AI Search, Azure OpenAI, and Azure Monitor.

Google Vertex AI Agent Builder emphasizes enterprise search and grounding, with strong integration with Google Workspace data sources. If your organization’s knowledge lives in Google Drive, Gmail, and Google Docs, Vertex’s grounding capabilities are particularly compelling.

When managed platforms make sense: You need to go from zero to production agent in weeks, not months. You don’t have (or don’t want to build) a dedicated AI platform team. Your use case fits the patterns the platform supports. You’re already committed to the vendor’s cloud ecosystem.

When managed platforms are a trap: Your use case requires custom orchestration logic that the platform doesn’t support. You need to switch models frequently as the landscape evolves (platform abstractions can make model-swapping harder, not easier). You need deep cost optimization that requires control over caching, batching, and context management at a level the platform doesn’t expose.

The Decision Matrix

Here’s the framework I use when advising teams on this decision. It’s not a flowchart; it’s a set of questions whose answers point toward a path.

How specialized is your use case? If your agent follows patterns that thousands of other companies also need (customer support, document Q&A, data analysis), a managed platform or framework will get you there faster. If you’re building something genuinely novel (an agent that navigates a proprietary workflow no vendor has templated), raw code gives you the flexibility you need.

How fast is your landscape shifting? This is the question most teams underweight. In March 2026, the agentic AI ecosystem is evolving monthly. New protocols emerge. New models ship. New patterns become best practices while old ones become antipatterns. If you’ve over-committed to a specific framework or platform, every paradigm shift becomes a migration project. Raw code is more work to build but easier to evolve. Frameworks and platforms are faster to ship but harder to pivot.

What’s your team’s AI engineering depth? A team of experienced ML engineers can be productive with raw code. A team of strong software engineers who are new to AI will benefit from a framework’s guardrails and patterns. A team with limited engineering capacity should start with a managed platform and graduate to a framework as their expertise grows.

How important is vendor diversification? If you need the ability to swap models (running Claude for some tasks and GPT-4 for others, or switching providers entirely as pricing and capability evolve), raw code and model-agnostic frameworks give you that flexibility. Managed platforms make it harder, by design.

What’s your observability requirement? If you need the deep tracing and auditability we discussed in Part 3, verify that your chosen framework or platform supports it natively. Some platforms provide black-box observability (request in, response out) without the reasoning-trace-level visibility that production agent systems require.

The Hybrid Path: Where Most Teams Actually Land

In practice, most production teams don’t pick one path exclusively. They run a hybrid.

The pattern I see most often: a managed platform for simple, high-volume use cases (document Q&A, basic support triage) combined with a framework-based system for complex, multi-agent workflows. The managed platform handles the 80% of interactions that follow predictable patterns. The custom framework handles the 20% that require sophisticated reasoning, multi-step tool use, or cross-agent coordination.

This maps back to the workflow-versus-autonomy spectrum from Part 2. The managed platform is your workflow layer: deterministic, reliable, cost-effective. The custom framework is your autonomy layer: flexible, powerful, expensive. Both exist in the same architecture, handling different tiers of complexity.

At Acme Corp, this might look like: Bedrock AgentCore handles the simple “What’s my order status?” queries, which are high volume, follow a predictable pattern, and need no custom logic. A LangGraph-based system handles the complex escalation cases: multi-turn reasoning, cross-system tool calls, human-in-the-loop approvals. A shared MCP layer (from Part 4) provides both systems with access to the same tools, so upgrading a tool integration benefits both tiers simultaneously.
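The piece that ties the two tiers together is a router in front of them. The sketch below assumes a deliberately naive keyword classifier and treats each tier as a plain callable. `classify_intent`, `SIMPLE_INTENTS`, and `route` are hypothetical names, and a production router would more likely use a small model or the platform's own intent detection.

```python
from typing import Callable

# Intents considered safe for the high-volume managed tier (assumed list).
SIMPLE_INTENTS = {"order_status", "store_hours"}


def classify_intent(message: str) -> str:
    """Naive keyword classifier, for illustration only."""
    text = message.lower()
    if "order status" in text or "where is my order" in text:
        return "order_status"
    if "hours" in text:
        return "store_hours"
    return "complex"


def route(message: str,
          managed_platform: Callable[[str], str],
          framework_agent: Callable[[str], str]) -> str:
    """Send predictable queries to the managed tier, everything else to the agent."""
    intent = classify_intent(message)
    handler = managed_platform if intent in SIMPLE_INTENTS else framework_agent
    return handler(message)


# Stand-ins for the two tiers; real ones would call the platform and the agent.
managed = lambda message: f"[managed] {message}"
framework = lambda message: f"[framework] {message}"

print(route("What's my order status?", managed, framework))
print(route("I was charged twice and need a refund", managed, framework))
```

Defaulting unknown intents to the more capable tier is a deliberate choice here: a misrouted simple query costs a little extra, while a misrouted escalation costs a customer.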

The Uncomfortable Truth

Here’s what I wish someone had told me before I started building agentic systems: the right answer changes.

The framework that’s perfect for your MVP might be the bottleneck that prevents your v2. The managed platform that saves your team six months of buildout might charge you ten times what raw code would cost at scale. The raw code approach that gives you maximum control might leave you maintaining infrastructure while your competitors ship features.

The 100x move isn’t picking the “best” option. It’s designing for change. Isolate your business logic from your orchestration layer. Define clean interfaces between your agents, tools, and memory systems. Use the patterns from Parts 1-4 (MCP for tool connections, structured outputs for inter-component communication, traces for debugging) to create seams in your architecture where you can swap one implementation for another without rebuilding the whole system.

This is, once again, a lesson I learned from accessibility before I learned it from AI.

The Bioptic Lens

My assistive technology stack has changed dramatically over thirteen years. Screen magnifiers have come and gone. Screen readers have evolved. Operating systems have overhauled their accessibility APIs. The only constant is that I need to see code, navigate interfaces, and produce software.

The developers who thrived through these transitions were the ones who had a clear mental model of what they needed to accomplish that was independent of how any specific tool accomplished it. They could switch from one magnifier to another because they understood the principles (contrast, zoom level, focus tracking), not just the keybindings.

Build your agent architecture the same way. Understand the principles: context engineering, tool integration, reasoning chains, guardrails, memory, identity. Then choose the tools that implement those principles for your current situation. When the tools change (and they will), the principles carry forward.

Series Conclusion: The Architecture of Agency

We’ve traveled a long road across these five parts.

We started with a chatbot that couldn’t see past its own context window, a myopic prediction engine that hallucinated with confidence. We diagnosed the problem as architectural, not intellectual: the model wasn’t stupid, it was blind.

We gave it vision through RAG and hands through tools. We taught it to think with Chain of Thought and to act responsibly with guardrails. We connected it to the broader enterprise through standardized protocols and gave it memory so it could learn from experience. And now we’ve mapped the landscape of frameworks and platforms that can bring this architecture to life.

Through every part, the bioptic lens has held. The same principles that make software accessible to a developer with 20/150 vision (clear context, explicit reasoning, standardized interfaces, managed limitations, and tools that extend rather than replace human capability) are the ones that make agentic AI systems reliable, auditable, and safe.

An agent isn’t a magical digital worker. It’s an engineered system with a myopic engine at its core, surrounded by layers of architecture that compensate for its limitations and amplify its strengths. The quality of that architecture, not the intelligence of the model, is what separates a demo from a product.

Build accordingly.